Homework 5: Branch prediction, SMT, and hardware resources

Due: Wednedsay, 4/15 at 11pm

Overview

In this assignment, you will explore CPU design through a hardware resource lens in multiple ways. First, you will implement a hybrid branch predictor that chooses among five existing branch predictor implementations (including state-of-the-art predictors), without caring about hardware resources. Then, you will reason about how workloads on an SMT processor share hardware resources, as a lead-in to your final project. You will combine the idea of branch prediction and simultaneous execution by reading about multipath execution.

Part 1 and Part 2 can be done independently of one another. Part 3 combines the ideas from both.

Stencil code (important: update your repos!!)

Right before this assignment went live, we submitted pull requests to your github classroom copy of gem5. Either merge the pull request into your working copy, or, if not working from github classroom, make sure you’ve pulled the latest changes to the assignments repo.

The config files for this assignment are found in configs/assignment/hw5 and the workloads are found in tests/test-progs/microbench-riscv and tests/test-progs/smt-branches.

Submission

In part 1, you will modify code files that get autograded (hybrid.cc and hybrid.h). In part 2, you will modify files that we will build and run, but that don’t have specific autograder tests associated with them (due to the open-ended nature of what we’re asking you to do). You’ll be responding to conceptual and reflection questions along the way, which you should include in a single PDF in your submission.

Part 1: Hybrid Branch Predictor

In class, we learned about a tournament branch predictor, which, for each branch, chooses between two predictors. One simple way to implement this while saving hardware resources is to keep an extra 2-bit saturating counter for each branch. If the counter is, for example, 00 or 01, the branch uses the global predictor (based on the outcome history of all recent branches). However, if the counter is 10 or 11, the branch uses the local predictor (which itself is a 2-bit saturating counter where the bits encode Strong/Weak Taken/Not Taken, as we saw in class). Once the branch outcome is known, the saturating counter is updated towards the more accurate predictor (for example, if the saturating counter is 01, and the global predictor ends up incorrect but the local predictor ends up correct, the saturating counter is updated to 10, which means the branch will switch to using the local predictor on the next iteration. In the opposite case, the saturating counter is updated to 00, which means the branch will continue using the global predictor and will require two mispredictions of the global predictor to switch to the local predictor).

The idea behind this scheme is that each branch adapts to using the branch predictor that has worked well for it. Using 2-bit saturating counters in this way cuts down on transistor usage and also hardware complexity, while still allowing for pretty stark gains in accuracy over a local predictor. In CS1952y homeworks, however, we are somewhat unburdened by such constraints as “transistor usage.” Let’s use the full simulation capabilities of gem5 to explore a much more souped up hybrid branch predictor that chooses between 5 predictors for each branch.

The stencil code, found in src/cpu/pred/hybrid.{cc, hh}, holds five predictors that already come with gem5. The configuration in src/cpu/pred/BranchPredictor.py (which you will not be changing) initializes each predictor with the default parameters. To work with the gem5 CPUs, a class that inherits from BPredUnit needs to implement four methods: lookup, update, updateHistories, and squash. You can see how these methods are called by a branch predictor unit in src/cpu/pred/bpred_unit.cc. Most importantly, when lookup is called, each branch predictor can create a pointer to some sort of history object for each branch, which the caller code then passes back to update the predictor on the next action (update, speculative update, or squash). The provided HybridBP currently wraps a local predictor and shows how this data structure is created and destroyed.

If you run the provided code, you should see the same branch predictor accuracy (system.cpu.branchPred.condIncorrect / system.cpu.branchPred.condPredicted in stats) for the hybrid BP as the local BP (to switch between branch predictors in the config script, use the flag --branchpred).

Modify the HybridBP class to use all five predictors, instead of just the one. Each implementation of the four BP methods should call that same method on all five predictors, to allow them to keep track of internal state (similar to how each method makes the calls on localBP) – essentially running the five predictors in parallel. In lookup, your implementation should return the prediction of the branch predictor that has been the most accurate so far for that branch, using heuristics and data structures of your choosing. lookup should increment the stat counter for whichever branch predictor was used to return the result for the given branch at the given moment. This means that the five statistics {local, tournament, bimode, tage, perceptron}Preds should add up to condPredicted in the end.

There’s a lot of flexibility in how to implement this – we’ll be looking to see that you’re actually running the branch predictors in parallel and that you’re returning the branch decision based on the branch history. We’re also looking for you to be strategic in handling branches that do not yet have an established history.

Using the bp.py config, run each of the predictors (hybrid, and the five provided as standalones) on the CCa, CCm, and CRm benchmarks (the descriptions of them are given in the Microbenchmark repository that we pulled them from). Which predictor performs the best? Is this surprising?

A tournament of a different kind

For the past several years, a “CBP” (Championship Branch Prediction) competition has been hosted alongside ISCA (the International Symposium on Computer Architecture). In 2025, the first-place team received an award of $1000 (you can see everyone’s papers here). One kicker: the branch predictors must use a budget of 192 kB total. Certainly, the hybrid branch predictors you’re working on don’t qualify.

However, even though we’re being greedy with resources (and even though we don’t have $1000 to offer to anyone), we can still have some fun of our own. When you submit to the autograder for this assignment, your BP accuracy will be ranked against everyone else’s, and you’ll be able to access a leaderboard (where you get represented by a pseudonym of your choosing) to see how your predictor stacks up against everyone else’s. This is just for fun – winning this “competition” might get you an extra credit point or two, and we might display a badge with your chosen pseudonym on the course website, but your submission will be graded independent of your rank.

Submission and written response

Submit your hybrid.hh and hybrid.cc files, as well as answers to the following questions:

A: As we’ll see in class, it’s getting a bit harder to cram more and more transistors on a board, due to manufacturing capabilities, quantum effects at small scales, and power/energy constraints (justifying CBP’s restriction of 192 kB!). Nevertheless, scientists and engineers keep coming up with new technologies to surmount what seemed impossible mere years ago. Let’s say we come up with a new technology that allows us to increase the complexity of our circuitry in a way where we can actually afford to implement our hybrid BP in hardware (still at some power/energy/space/$$$ cost). Do you think it would be worth it to add this technology to an existing OOO speculative superscalar CPU, or would the technology be better spent trying to improve throughput/latency in some other way? Regardless of your opinion, in your answer, give at least two examples of how a theoretical large increase circuit complexity could get around some of the limits of ILP we’ve seen in class, and be creative (don’t just say “larger cache” or “more functional units.” Can we move things around? If we wanted to make just one or two things in our CPU circuit fast, what would they be?)

B: Variations on the TAGE predictor (such as LTAGE, used in this assignment, or LTAGE with statistical correlator, provided by gem5) are considered to be the ones to beat in modern branch predictor competitions (a real thing). This might not match your observations when running the benchmark programs. Based on the TAGE paper (feel free to find other sources that describe the predictor instead, and cite them), where do you think this mismatch comes from? Your answer might consider the provided benchmarks, the parameters available to configure TAGE, your understanding of gem5, or any other ideas you think of, but should demonstrate understanding of the underpinnings of the TAGE predictor.

Part 2: Hypotheses on SMT

In this part of the homework, we want you to engage with OOO processors in more depth, by thinking more deeply about how all of the hardware resources (ROB entries, renamed registers/reservation stations, functional units, load/store queues, caches, etc) are utilized in a simultaneous multithreading context. A meta-goal of this section is to get you to think about how you can design follow-up questions that explain effects you see when you evaluate hypotheses – which is exactly what you do should do in your final project!

First, consider a single workload W running on a single hart of a processor, which completes in some number of cycles c_single. If we want to run W twice simultaneously on two harts of the processor, what should the lower bound and upper bounds be for c_threaded, or the cycles it takes to complete the two Ws be?

Check your work

In the best case, the two Ws run complete in parallel, which means that the processor is able to complete both in c_single cycles. In the worst case, the two Ws can't make any use of parallelism at all, which means the processor will take 2 * c_single cycles.In tests/test-progs/smt-branches, we’ve given you two programs which run almost the same workload, except that the branching behavior of one program is very uniform, and the other is nonuniform. The config file base.py allows you to run one workload on a standard OOO processor with no harts, and the config file smt.py allows you to run two workloads on a standard OOO processor with two harts. Before running the workloads with these configs, make a hypothesis of what you expect to observe for the relative cycles between each pair of these runs. In particular, which of these cycle ratios, which measure the overhead of doubling the workload on an SMT processor vs the single-threaded single workload, do you expect to be the best (lowest): c(U + U) / c(U); c(N + N) / c(N); c(U + N) / [(c(N) + c(U)) / 2]?

base.pywith uniform workload (U)base.pywith nonuniform workload (N)smt.pywith each hart running a uniform workload (U + U)smt.pywith each hart running a nonuniform workload (N + N)smt.pywith one hart running a uniform workload and one hart running a non-uniform workload (U + N)

Now, run the experiment (saving the stats.txt files for each run to refer back to). What do you observe?

Supplemental analysis

Hopefully, the results are at least somewhat suprising – you probably noticed that one pair of workloads run on an SMT processor had a slightly larger overhead than the other pairs. But why? How that pair of workloads shares hardware resources probably has something to do with the answer, but how can we know for sure? If you take a look at your stats.txt files, you’ll see that the SMT runs are now reporting some numbers per-hart (the stat names will end in 0 or 1), but which ones explain the story? There are a few things stopping us from knowing for certain:

- The workloads are fairly simple. Maybe using workloads that put more pressure on the processor would help expose some effects.

- We’re using the default OOO processor configuration. Maybe the resources are too plentiful to see the true detriments of sharing. Can we play around with how resources are allocated in order to gain an understanding?

- The stats file gives us a lot of information, but some of it isn’t broken down to the hart level. Maybe better stats could help us understand what hart is using what resources.

First, make a secondary hypothesis on how SMT hardware sharing might explain the results you observe. Make sure to be specific! Try to narrow it down to a specific resource or a specific observable effect. If you’re not sure where to start, you can read about the structure of the O3 processor or check your stats.txt file to try to find a stat that is subtantially worse in one SMT workload over the other two.

Now, make a modification to the provided config file(s) and/or an existing hardware unit (such as the ROB) to provide per-hart (thread, in gem5 terminology) stats reporting in a way that helps you test your hypothesis. It’s okay if your secondary hypothesis ends up being disproven. We’re looking to see how you did your actual exploration. We’re also not looking for you to be exhaustive here. Just explore one (plausible) secondary hypothesis, and instrument gem5 to help you analyze it.

Submission and written response

In your submission, turn in any modified config file(s) and implementation file(s). Also explain your process:

C: What was your secondary hypothesis? What config changes or stats did you add to try to measure the hypothesis? What did you find? Does this support or disprove the hypothesis? What are the caveats of your conclusion (if you claim your hypothesis was supported, how do you know for sure? If it’s disproved, what would you measure as a follow-up)?

Part 3: Alternatively speculative execution (written only)

Let’s combine the ideas from Part 1 and Part 2. Multipath execution is a special offshoot of speculative execution, where instead of speculatively executing a single predicted path, the processor explores both sides of a branch simultaneously in some way. This threaded multipath execution (TME) paper uses SMT to accomplish this. Answer the following:

D: TME treats unused SMT contexts (harts) as “slack” capacity. In an optimistic world, this implies that we get speculative execution for “free” without even needing a branch predictor (in a realistic world, the paper discusses how implementing threaded multipath execution requires extra hardware, including a “branch confidence predictor” to choose a branch to fork). Given what you learned in part 2, what are the caveats of this claim? Based on the results presented in the TME paper, would you rather use TME or a non-SMT processor with a performant branch predictor?

TME only ever forks on the path of execution that it considers to be “primary.” Consider this alternative approach (which we loving call P3, or the Paranoid Prediction Processor), where we spawn fork on every thread (as long as hardware resources allow – this introduces a new kind of structural hazard, similarly to how TME bounds the extra harts it uses), and answer the following:

E: In an OOO implementation of the P3, what needs to happen on a branch (assuming sufficient harts still exist for forking)? Be detailed about the information that the newly spawned hart needs to track, and make sure you account for control flow correctness of multiple sequential branches and data flow correctness when multiple harts are executing in parallel and when a hart needs to be squashed.

Quiz prep

These are the sorts of questions you might expect to appear on the quiz:

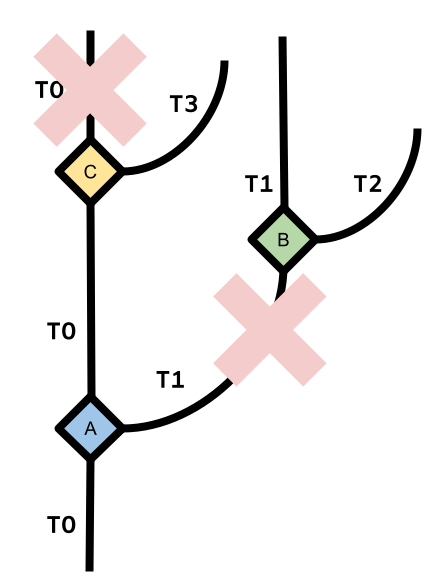

- Given two workloads and a CPU setup (single-core vs. SMT), diagram how the workloads run (i.e. which workload is using which hardware resource in which cycle); similar to P&H 6.9

- Given a snapshot of the processor state (registers, reservation stations) of an OOO (Tomasulo’s algorithm) CPU at a given cycle and the next instruction to issue, indicate what the processor state changes to after the instruction is issued. H&P (not P&H) exercise 3.15 is similar.

- Same as above, but with a speculative processor (processor state now includes ROB)

- Same as above, but with a superscalar/multi-issue processor. An offshoot of this is H&P exercise 3.9. It uses a rename table (figure 3.52) instead of using the ROB/reservation stations to store data directly (the abstraction we used in class), but it illustrates with inter-bundle dependence tracking is important for superscalar processors.

- Describe (or rate on a scale) the tradeoffs between dynamic (pipelining, OOO, speculation, superscalar) vs static (compiler, VLIW) approaches to processor efficiency (H&P exercise 3.14 gives a quantitative view into this)

- Explain the role of the BTB and the RAS in a speculative processor; alternatively, given an existing BTB (indexed by the PC) and branch prediction outcome, indicate the next PC the processor chooses.

Also take a look at P&H exercises 4.28-4.31. They ask a lot of nice questions about modern ILP techniques.

Changelog

This will be updated whenever any clarifications have been added to this assignment. See also the FAQ on Ed!